
How to Use the GEO Metrics MCP to Understand Your AI Positioning

Connect GEO Metrics to Claude, ChatGPT and Perplexity via MCP. Analyze your brand's AI visibility in real time. Step-by-step guide with prompts and a real use case.

Your brand can rank on page one of Google and not exist for ChatGPT. That's already happening. And most brands have no idea, because nobody has measured the gap.

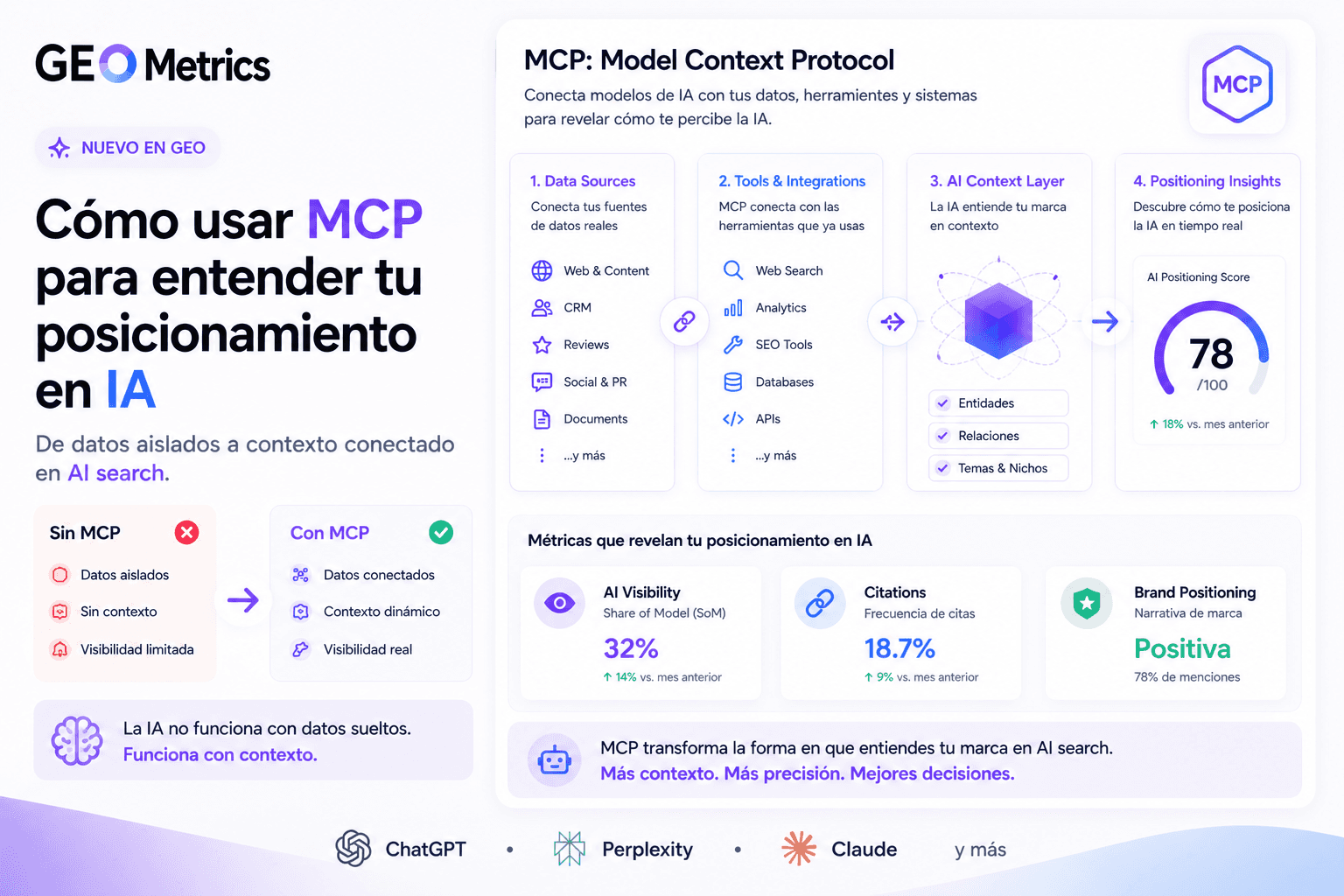

The GEO Metrics MCP server, built on the Model Context Protocol (MCP), changes that. With a single connection, Claude, ChatGPT and Perplexity can read your account's visibility data in real time: Share of Model, AI-cited domains, average position by engine and competitive gaps. No exports. No intermediate dashboards.

This guide explains how to connect the MCP, what analyses you can run from day one, and how one team used this data to design a Reddit strategy that moved their rankings in Grok and Perplexity in under 30 days.

TL;DR

✓ What it is: The GEO Metrics MCP connects your AI (Claude, ChatGPT, Perplexity) directly to your brand's visibility data. No exports, no copy-paste.

✓ What you can do: Ask your AI for Share of Model summaries, competitor domain analysis, engine-specific gaps and content strategy — all from a single conversation.

✓ Real result: One team identified Reddit as the dominant citation source (37 citations in 30 days on a key prompt) and built a strategy that opened new mentions in Grok and Copilot within 4 weeks.

✓ Setup time: Under 5 minutes. Paste a URL, authenticate, done.

✓ Best engine for deep analysis: Claude — smoothest MCP integration, richest metadata access.

Claude vs ChatGPT vs Perplexity: MCP compatibility comparison

The MCP standard works across all three engines, but the experience is not identical. Here is how they compare on the dimensions that matter most for AI positioning analysis:

| Dimension | Claude | ChatGPT | Perplexity |
| --- | --- | --- | --- |
| MCP standard support | ✔ Native (developed by Anthropic) | ✔ Via Connectors panel | ✔ Via PerplexityXPC desktop app |
| Setup complexity | Low (Settings → Integrations) | Low (Settings → Connectors) | Medium (requires desktop app install) |
| Mobile support | ✔ Yes (iOS & Android) | ✔ Yes (iOS & Android) | ✘ Desktop app required for MCP |
| Response metadata access | Full (response_preview, citations, positions) | Partial (core metrics available) | Partial (combined with live search context) |
| Deep data analysis | ★★★★★ Best in class | ★★★★☆ Strong | ★★★☆☆ Good for quick queries |
| Cross-project analysis | ✔ Full multi-project support | ✔ Supported | ⚠ Limited by context window |
| Conversation memory | None (re-read project each session) | None (re-read project each session) | None (re-read project each session) |
| Best for | Strategic analysis, content strategy, competitive diagnosis | Quick queries, team workflows, GPT Action automations | Research + AI data combined, real-time source validation |
| GEO Metrics recommendation | ⭐ Primary engine for deep work | Secondary: great for daily check-ins | Secondary: useful for source discovery |

All three integrations use the same MCP server URL: https://api.geometrics.app/mcp. The data your brand sees is always scoped to your own account — no cross-account exposure regardless of which engine you use.

What is the MCP and why does it matter for your AI positioning?

The Model Context Protocol (MCP) is an open standard developed by Anthropic. It lets language models like Claude connect directly to external platforms and query live data during a conversation.

Before MCP, analyzing a brand's AI positioning meant logging into the platform, reviewing each project manually, exporting metrics, and loading them into another tool. It was slow and prone to losing context between steps.

With the GEO Metrics MCP active, the flow is different: you ask Claude what your Share of Model is for the last 30 days, and Claude queries your account on the spot, cross-references data across projects, and delivers the analysis. The same process applies to ChatGPT and Perplexity.

The MCP is not a complex technical integration. It's the way your AI stops working with generic training data and starts working with the real data from your brand.
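To make "queries your account on the spot" more concrete: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below builds the kind of request an AI client might send on your behalf. The tool name `get_ranking_prompt_details` is one mentioned later in this guide; the argument names (`project`, `prompt`, `days`) are hypothetical, since the real schema is whatever the server advertises to the client.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP tool invocation as a JSON-RPC 2.0 request."""
    payload = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",  # standard MCP method for invoking a tool
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(payload, indent=2)


# Hypothetical arguments: the actual parameter names are defined by the server.
request = build_tool_call(
    1,
    "get_ranking_prompt_details",
    {
        "project": "GEO Metrics",
        "prompt": "best tools to improve visibility in ChatGPT",
        "days": 30,
    },
)
print(request)
```

You never write this request yourself. The AI client generates it when your question calls for account data, which is why the setup needs nothing beyond a URL and a login.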

How to connect the GEO Metrics MCP: step-by-step guide

The connection takes less than 5 minutes on any of the three engines. All you need is an active account at trygeometrics.com and access to Claude, ChatGPT or Perplexity.

Connecting GEO Metrics with Claude

Go to claude.ai and open the menu from the profile icon (top right corner).

Navigate to Settings → Integrations.

Click "Add integration" and paste the MCP server URL: https://api.geometrics.app/mcp

Authenticate with your GEO Metrics credentials.

Once connected, Claude automatically recognizes the available tools and uses them when context requires it.

Connecting GEO Metrics with ChatGPT

Go to chatgpt.com → Settings → Connectors.

Click "Add Connector" and paste the URL: https://api.geometrics.app/mcp

Select OAuth as the authentication method.

Log in to GEO Metrics and grant access. The tools will be available in all your ChatGPT conversations.

Connecting GEO Metrics with Perplexity

Perplexity requires one extra step: installing the PerplexityXPC desktop app.

Download and install PerplexityXPC from the Perplexity website.

Open Settings → Connectors in the desktop app.

Click "Add Connector" and paste the URL: https://api.geometrics.app/mcp

Complete the OAuth flow: you'll be redirected to GEO Metrics to log in and approve the connection.

Enable the connector under Sources. If it doesn't appear immediately, disable it, re-enable it, and restart Perplexity.

What analyses can you run with the MCP from day one?

Once connected, the AI has access to all the read tools in your account. These are the most commonly used analyses:

Project executive summary:

Share of Model, average position and the 3 critical action points from the last 30 days.

Period comparison:

Last 7 days vs. last 30 days performance. Trend detection.

Cited domain analysis:

Which sources the AI cites when answering your prompts and how many times. Essential for content strategy.

Gaps by AI engine:

Which engines (ChatGPT, Grok, Gemini, Perplexity, Copilot) show zero mentions for your brand, and whether the pattern repeats across all prompts or only specific ones.

Gap-based content strategy:

What content to create to close visibility gaps, based on the domains currently outranking you.
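As an illustration of the "gaps by AI engine" analysis above, here is a minimal sketch of the logic involved: given brand mention counts per prompt and per engine, it flags engines with zero mentions and says whether the gap covers every prompt or only some. The data structure and the sample numbers are hypothetical, not an actual GEO Metrics response.

```python
ENGINES = ["ChatGPT", "Grok", "Gemini", "Perplexity", "Copilot"]


def engine_gaps(mentions: dict[str, dict[str, int]]) -> dict[str, str]:
    """Classify engines with zero-mention gaps.

    `mentions` maps prompt -> engine -> brand mention count.
    Returns engine -> "all prompts" or "some prompts"; engines with
    at least one mention on every prompt are omitted.
    """
    if not mentions:
        return {}
    gaps = {}
    for engine in ENGINES:
        zero_prompts = [
            prompt
            for prompt, by_engine in mentions.items()
            if by_engine.get(engine, 0) == 0
        ]
        if len(zero_prompts) == len(mentions):
            gaps[engine] = "all prompts"
        elif zero_prompts:
            gaps[engine] = "some prompts"
    return gaps


# Illustrative numbers only.
sample = {
    "prompt A": {"ChatGPT": 3, "Grok": 0, "Gemini": 1, "Perplexity": 2, "Copilot": 0},
    "prompt B": {"ChatGPT": 1, "Grok": 0, "Gemini": 0, "Perplexity": 4, "Copilot": 2},
}
print(engine_gaps(sample))
```

In this sample, Grok is a systemic gap (zero mentions everywhere), while Gemini and Copilot are prompt-specific gaps. The two cases usually call for different responses.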

The MCP is read-only. Claude can analyze all your data, but it cannot create projects or change configurations. Those actions are done directly in the platform.

How to write prompts that generate actionable analyses

The MCP works best when you are specific about which project and which time period you care about. These are the prompt structures that produce the best results:

For a general overview:

"Look at the [project name] project. Give me an executive summary for the last 30 days: Share of Model, average position and the 3 most critical points that require action."

For period comparisons:

"Compare the performance of the [project name] project over the last 7 days versus the last 30. Is there any relevant trend?"

For competitive analysis:

"For all the prompts in the [project name] project, which domains are cited most often in the AI responses? Is there any domain that appears across all prompts simultaneously?"

For detecting engine gaps:

"Which AI engines gave us zero mentions this week? Is this a pattern that repeats across all prompts, or is it specific to certain ones?"

For content strategy:

"Based on the prompts where we don't appear and the domains that are outranking us, what type of content should we create to close those gaps?"

Real use case: a Reddit strategy built on GEO Metrics data

Reddit is not a secondary marketing channel. In the 2026 AI ecosystem, Reddit is the most cited source by AI models for subjective queries. When Perplexity, Grok or AI Overviews answer "what's the best tool for X?", they are reading Reddit discussions to build that answer.

GEO Metrics data quantifies this: on the prompt "best tools to improve visibility in ChatGPT" — one of the most strategic prompts analyzed — reddit.com accumulated 37 citations in 30 days. First place overall, ahead of YouTube (28 citations), SE Ranking (22) and Ahrefs (18).
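To put those counts in proportion, the short sketch below computes each domain's share of the citations among the four domains quoted above. It only uses the figures from this article; the total citation count across all domains is not given, so the shares are relative to these four sources only.

```python
def citation_shares(citations: dict[str, int]) -> dict[str, float]:
    """Return each domain's fraction of the citations in `citations`."""
    total = sum(citations.values())
    if total == 0:
        return {domain: 0.0 for domain in citations}
    return {domain: count / total for domain, count in citations.items()}


# Counts quoted in the article for the prompt
# "best tools to improve visibility in ChatGPT" (last 30 days).
top_domains = {"reddit.com": 37, "YouTube": 28, "SE Ranking": 22, "Ahrefs": 18}

shares = citation_shares(top_domains)
for domain, share in sorted(shares.items(), key=lambda item: item[1], reverse=True):
    print(f"{domain}: {top_domains[domain]} citations, {share:.1%} of the four listed")
```

Among these four sources, reddit.com accounts for 37 of 105 citations, roughly 35%.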

If your brand doesn't appear in those Reddit discussions, the AI doesn't consider it a community-validated option — even if you rank well in organic search.

This is exactly the type of insight the MCP extracts automatically: not just that Reddit is relevant, but the exact citation count, the specific prompt where it happens, and the context to turn it into a concrete action.

Step 1: Diagnosis — reading the data with Claude via MCP

The conversation with Claude starts with a diagnostic prompt:

"Look at the top cited domains for the GEO Metrics project in the last 30 days. For the prompt 'best tools to improve visibility in ChatGPT', how dominant is Reddit and in what context are the AIs citing it?"

Claude calls get_ranking_prompt_details and reports that reddit.com ranks first with 37 citations. The engines that draw on it most are Grok (68 total sources per execution), ChatGPT (15–16 sources) and Perplexity (9–10). As for context, the models turn to Reddit for tool opinions because forums are considered harder to manipulate than corporate blogs.

The follow-up question to Claude: which specific subreddits should we be present in? Claude cross-references the response_preview data with its knowledge of the Reddit ecosystem and answers: r/SEO, r/marketing, r/DigitalMarketing, r/artificial, r/ChatGPT, r/LanguageModelOptimization.

Step 2: Identify threads with the highest citation potential

Not all Reddit threads carry the same weight for AI models. The models prioritize threads with these characteristics:

High upvote count (signal of community consensus).

Multiple different users mentioning the same tool.

Age combined with recent activity: the thread is still "alive".

Title using the exact terms the AI searches for when answering a query.

With the MCP, Claude analyzes the response_preview from the latest executions and detects the pattern: AI models primarily cite threads of the type "What's the best tool for X?" with comparative answers from real users. They do not cite promotional posts or generic listicles.
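The four thread characteristics listed above can be turned into a simple screening checklist. The sketch below is one way to do that; every threshold in it (50 upvotes, 3 users, 30 days old, 14 days idle) is a hypothetical placeholder chosen for illustration, not a value published by GEO Metrics or by any AI engine.

```python
from dataclasses import dataclass


@dataclass
class Thread:
    title: str
    upvotes: int
    distinct_users_mentioning_tool: int
    age_days: int
    days_since_last_comment: int


def citation_signals(
    thread: Thread,
    query_terms: list[str],
    min_upvotes: int = 50,      # hypothetical threshold
    min_users: int = 3,         # hypothetical threshold
    min_age_days: int = 30,     # hypothetical threshold
    max_idle_days: int = 14,    # hypothetical threshold
) -> list[str]:
    """Return which of the four characteristics a thread shows."""
    signals = []
    if thread.upvotes >= min_upvotes:
        signals.append("high upvotes")
    if thread.distinct_users_mentioning_tool >= min_users:
        signals.append("multiple users")
    if thread.age_days >= min_age_days and thread.days_since_last_comment <= max_idle_days:
        signals.append("aged but active")
    title = thread.title.lower()
    if all(term.lower() in title for term in query_terms):
        signals.append("title matches query terms")
    return signals


example = Thread(
    title="What's the best tool for tracking AI visibility?",
    upvotes=120,
    distinct_users_mentioning_tool=4,
    age_days=90,
    days_since_last_comment=3,
)
print(citation_signals(example, ["best tool", "AI visibility"]))
```

A thread that shows all four signals is a stronger candidate for participation than one that shows a single signal, but the checklist is a prioritization aid, not a prediction of what any model will cite.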

Step 3: Reddit participation strategy (weeks 1–8)

Level 1 — Organic presence (weeks 1–4)

The goal is to build a genuine participation history before mentioning the brand. AI models can distinguish between spam accounts and real contributors.

Create or activate an account with at least 30 days of history and positive karma before mentioning the brand.

Participate in r/SEO and r/marketing by answering general questions about GEO, LLMO and AEO, without mentioning the tool yet.

Tone must be informative and specific: data, examples, concrete results. Reddit penalizes generic marketing with downvotes, and AI models interpret that as a negative signal.

Level 2 — In-context mentions (weeks 3–6)

With credibility established, introduce mentions where they are genuinely useful. The formats AI models cite most often:

Replies to threads like "Looking for tools to track AI visibility" with personal experience and specific real-usage data.

Honest comparisons: mention the tool alongside competitors. Co-citation (appearing next to Ahrefs or Semrush) is a validation signal for the models.

Specific use cases with concrete results: not "the tool is great" but "I used GEO Metrics to detect we had zero mentions in Grok for this specific prompt, and here's how we fixed it".

Level 3 — Original strategic threads (weeks 5–8)

Create original threads designed to be cited. Titles with high AI citation probability:

"I tested 6 AI visibility tools for Spanish-speaking markets — here's what I found [with data]"

"Why your brand doesn't appear in ChatGPT even though it ranks on Google (and how to fix it)"

"Share of Model vs Share of Voice: the metric that actually matters in 2026"

Structure of each thread to maximize AI citability: direct question or statement in the title, concrete data in the first 3 paragraphs, tool comparison table (the format models extract most), mention of the tool with a specific attribute, and a community CTA to generate replies that amplify the thread.
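Note that the three levels overlap: Level 2 starts while Level 1 is still running, and Level 3 starts while Level 2 is still running. The small sketch below makes the weekly schedule explicit using the week ranges from this section. The dictionary and function names are illustrative only.

```python
# Week ranges (inclusive) taken from the three levels described above.
LEVELS = {
    "Level 1: organic presence": (1, 4),
    "Level 2: in-context mentions": (3, 6),
    "Level 3: original strategic threads": (5, 8),
}


def active_levels(week: int) -> list[str]:
    """Return the levels that should be running in a given week."""
    return [
        name
        for name, (start, end) in LEVELS.items()
        if start <= week <= end
    ]


for week in range(1, 9):
    print(f"Week {week}: {', '.join(active_levels(week))}")
```

Weeks 3 to 6 each have two levels active at once, which is where most of the workload concentrates.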

Step 4: Measuring impact week by week with Claude + MCP

The cycle closes by measuring whether the strategy is moving the metrics. With the MCP, monitoring is weekly:

"Comparing the last 7 days with the 7 days before, did domain citations increase in the prompts where Reddit appears as the dominant source? Has any AI engine started citing us this week where it wasn't before?"

The metrics that indicate the strategy is working:

Increase in domain_citations on the prompts where reddit.com leads the sources.

Brand appearances in engines that previously showed zero mentions (Grok and Copilot are the most influenced by Reddit).

Improvement in avg_position on the same prompts where the strategy was executed.
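The three indicators above amount to a week-over-week comparison. Here is a minimal sketch of that comparison, assuming you have noted the values Claude reports for two consecutive weeks. The `WeeklySnapshot` structure is hypothetical, the sample numbers are illustrative, and the code assumes a lower `avg_position` is better (position 1 being the top).

```python
from dataclasses import dataclass


@dataclass
class WeeklySnapshot:
    domain_citations: int
    avg_position: float
    engines_with_mentions: set[str]


def weekly_change(previous: WeeklySnapshot, current: WeeklySnapshot) -> dict:
    """Compare two weekly snapshots on the three indicators."""
    return {
        "citation_delta": current.domain_citations - previous.domain_citations,
        # Assumption: a lower average position means better placement.
        "position_improved": current.avg_position < previous.avg_position,
        "new_engines": sorted(
            current.engines_with_mentions - previous.engines_with_mentions
        ),
    }


# Illustrative numbers only.
last_week = WeeklySnapshot(4, 6.0, {"ChatGPT", "Perplexity"})
this_week = WeeklySnapshot(7, 4.5, {"ChatGPT", "Perplexity", "Grok"})
print(weekly_change(last_week, this_week))
```

A positive `citation_delta`, an improved position and a non-empty `new_engines` list together suggest the strategy is moving the metrics; a single week of change in only one of them is weaker evidence.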

If there is no movement after 4 weeks, Claude can help diagnose why: are the threads not getting upvotes? Is the AI's prompt semantically different from the title of your Reddit thread? Are competitors executing something that isn't covered in the current strategy?

The complete flow: from data to execution

Claude reads top_cited_domains via MCP → detects that reddit.com has 37 citations (dominant).

Claude analyzes response_preview → identifies what type of Reddit content the models are citing.

You design the strategy: subreddits, formats and timing.

You execute the participation strategy (4–8 weeks).

Claude monitors weekly via MCP whether domain citations are growing.

You adjust the strategy based on data feedback.

The MCP does not replace execution. Reddit requires genuine human participation: the models detect spam. What the MCP replaces is the manual analysis work. Instead of hours reviewing dashboards, you ask Claude a question and get the diagnosis in seconds.

Current limitations of the MCP integration

Read-only. Claude can read and analyze all your account data, but it cannot create projects, add prompts or change configurations. Those actions are done directly in the platform.

Context window. The integration returns the latest available executions. If a project has a low execution frequency, the data may not reflect the most recent state of the engines.

One account per integration. At the moment, the integration links one GEO Metrics account. If you manage multiple accounts (as an agency), specify the brand or project you want to work with in the prompt.

Frequently asked questions about the GEO Metrics MCP

Does Claude remember data between conversations?

No. Every conversation starts from scratch. To maintain context between sessions, the recommended practice is to always start with: "Connect to GEO Metrics and read the [project name] project before responding."
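If you run the same analyses every week, it can help to keep the opening line and the prompt structures from this guide as reusable templates. The sketch below fills in the project name and prepends the recommended session opener. The template keys and function name are illustrative; the prompt wording comes from this guide.

```python
SESSION_OPENER = "Connect to GEO Metrics and read the {project} project before responding."

PROMPT_TEMPLATES = {
    "overview": (
        "Look at the {project} project. Give me an executive summary for the "
        "last 30 days: Share of Model, average position and the 3 most "
        "critical points that require action."
    ),
    "comparison": (
        "Compare the performance of the {project} project over the last 7 days "
        "versus the last 30. Is there any relevant trend?"
    ),
}


def build_session_prompt(project: str, kind: str) -> str:
    """Return the session opener followed by the chosen analysis prompt."""
    if kind not in PROMPT_TEMPLATES:
        raise ValueError(f"Unknown prompt kind: {kind!r}")
    opener = SESSION_OPENER.format(project=project)
    body = PROMPT_TEMPLATES[kind].format(project=project)
    return f"{opener}\n\n{body}"


print(build_session_prompt("Acme", "overview"))
```

Pasting the resulting text as the first message of a new conversation restores the project context that the previous session lost.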

Does the MCP work in the Claude mobile app?

Yes. The MCP integration works across all versions of Claude where you have integrations configured, including the mobile app.

Is the data real-time?

Claude queries your account at the moment you ask, but the underlying data is only as fresh as the latest collection: GEO Metrics pulls data from the AI engines every 24 hours. In practice, the data you see is from the same day.

Can I use the MCP with AI tools other than Claude?

Yes. The MCP standard is supported by ChatGPT and Perplexity. The connection process varies slightly on each platform: see the step-by-step connection guide earlier in this article.

What is the difference between using the MCP with Claude vs. ChatGPT?

Claude has the smoothest integration, since MCP is a standard developed by Anthropic. ChatGPT and Perplexity also support it, but access to certain response metadata may vary across platforms. For deep data analysis, Claude is the recommended option.

Do I need technical knowledge to use the MCP?

No. The connection requires pasting a URL and authenticating with your GEO Metrics credentials. There is no additional technical setup. If you prefer a guided demo, the GEO Metrics team offers one at no cost.

What if my competitors also use the GEO Metrics MCP?

Each account can only access its own data. The GEO Metrics MCP does not expose data from other projects or accounts. What you can do is monitor the domains the AI cites when answering your prompts — which gives you indirect visibility into what sources your competitors are using to position themselves.

Start measuring your AI positioning today

The only way to know whether your brand appears when AI answers your customers' questions is to measure it. GEO Metrics gives you that data. The MCP lets you analyze it without leaving your AI conversation.

Activate your account at geometrics.app/register and connect the MCP in under 5 minutes.

GEO & AEO expert focused on making brands visible inside AI-generated answers. He leads GEO Metrics, measuring how models like ChatGPT and Gemini cite, rank, and describe brands. His work helps companies move from SEO rankings to true visibility in AI-driven search.
