A new version of the platform will be available soon —stay tuned! 🚀

Which prompt to track? The Real Problem with measuring AI Visibility

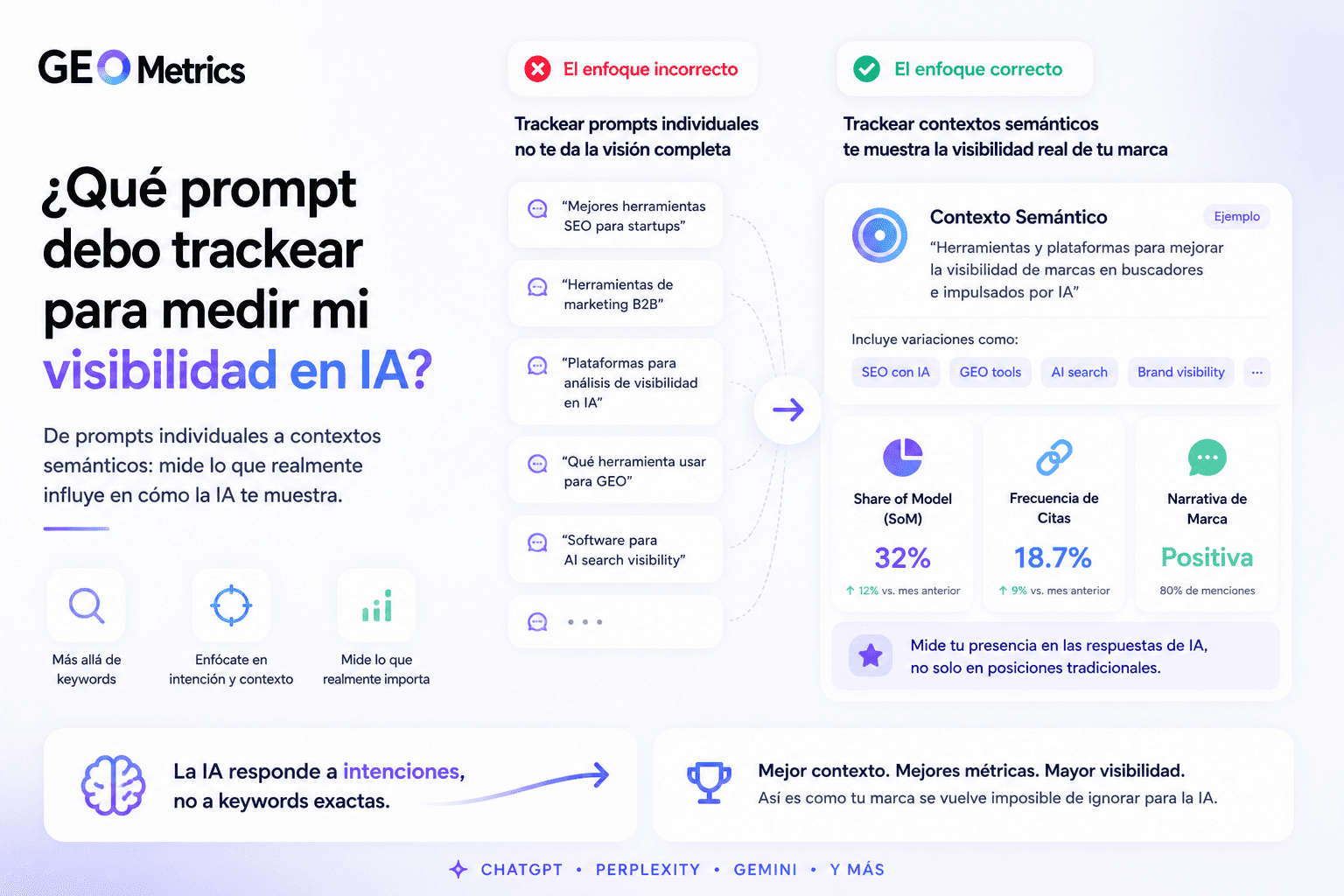

Tracking literal prompts in ChatGPT or Gemini doesn't work. Every user asks differently. The solution isn't more prompts — it's measuring contexts. This is how GEO really works.

TL;DR

Nobody asks an AI the same way. Tracking a literal prompt gives you data about that prompt — not about your actual visibility.

The problem isn't finding "the right prompt." It's that the right prompt doesn't exist: there are thousands of semantic variations for the same intent.

LLMs don't respond to words — they respond to contexts. That changes everything about what you need to measure.

If you're clear on your thematic contexts, you don't need large volumes of prompts — not even for large brands.

Measurement needs to be daily. Weekly or one-off tracking doesn't generate enough sample data to detect real patterns.

The Question That Stops Everyone When They Start With GEO

Imagine you run a tour company in Cancún. You've spent weeks hearing that you need to measure your visibility on ChatGPT and Gemini. You get it. You accept it. You open an AI monitoring tool and you're faced with the first screen: "Add the prompts you want to track."

And you freeze.

Because the question that comes to mind is completely legitimate: which prompt do I actually track?

"Tours in Cancún"? "Best excursions in Cancún"? "What tours to do in Cancún with kids"? "Comparison of tour companies in Cancún"? In American English, British English, or Australian? Formal or conversational?

A solo traveler from Germany doesn't ask the same way as a family of five from the UK. A millennial browsing Perplexity doesn't write the same as an executive asking Copilot between meetings.

The problem isn't that you don't know which prompt to pick. It's that the question has no correct answer. And even if it did, it would change every day.

Why the Literal Prompt Is the Wrong Unit of Measurement

We come from SEO, where the keyword was the atom of everything. You found the keyword, measured its volume, optimized for it. The system was deterministic: write well for that exact search term and you'd rank.

That model worked because Google did matching. It compared your page against the user's query and decided whether they aligned. Words mattered literally.

LLMs don't work that way.

A language model doesn't compare words. It interprets intent. It builds meaning from the full context of the question. And it generates a response that synthesizes multiple sources without necessarily using the user's exact words.

This has a direct consequence for measurement: the literal prompt a user writes is almost irrelevant to whether your brand appears or not. What matters is the intent behind the prompt. The thematic context that prompt belongs to.

The Tour Company Thought Experiment

Back to the Cancún example. Put ten different people in front of ChatGPT and ask them to search for a tour company. You'll get ten different prompts, guaranteed:

"What's the best tour company in Cancún?"

"Recommend some tours to do in Cancún"

"I'm flying to Cancún from London, which excursions are worth it?"

"Cancún tours reviews"

"What are the best snorkeling excursions in the Riviera Maya?"

"I want to visit Chichen Itza from Cancún, how do I organize it?"

None of them are the same. All of them belong to the same thematic context: organized tourism in Cancún. And all of them will generate responses where the same companies have a probability of appearing — or not.

If you track just one of those prompts, you have data about that prompt. You don't have data about your visibility.

The Solution: Stop Thinking in Prompts and Start Thinking in Contexts

The conceptual shift is this: what you need to map is not how users ask, but which thematic contexts you want your brand to be relevant in.

A thematic context is a semantic territory. For the tour company it would be something like: "purchase decision for organized excursions in the Mexican Caribbean." That territory includes dozens of possible prompts. But all of them activate the same space in the model.

When you measure by context instead of by literal prompt, you're measuring something real: the probability that your brand appears when someone has that intent, regardless of how they phrase it.

How Context-Based Measurement Works in Practice

Instead of defining an exact prompt, you define the thematic territory and build a representative set of prompts that covers it. You don't need to capture every possible variation or manage endless lists. If your contexts are clear, a well-defined set is more than enough — even for brands operating across multiple markets.

That means three things:

Semantic diversity. Prompts must cover different formulations of the same intent: direct questions, comparisons, informational searches, decision-stage queries.

Daily cadence. LLMs generate different responses at different moments. For the sample to be statistically representative, analysis needs to happen daily. With one-off or weekly measurements you don't accumulate enough volume: patterns don't emerge and any conclusion you draw could be noise from a single day.

Pattern analysis, not individual examples. A single ChatGPT result is anecdotal. The real signal appears when you analyze responses accumulated over time and detect which brands, which sources and which narrative appear consistently.

Which Metrics Actually Matter When You Measure by Context

Once the unit of measurement is the context and not the prompt, the metrics that matter change completely.

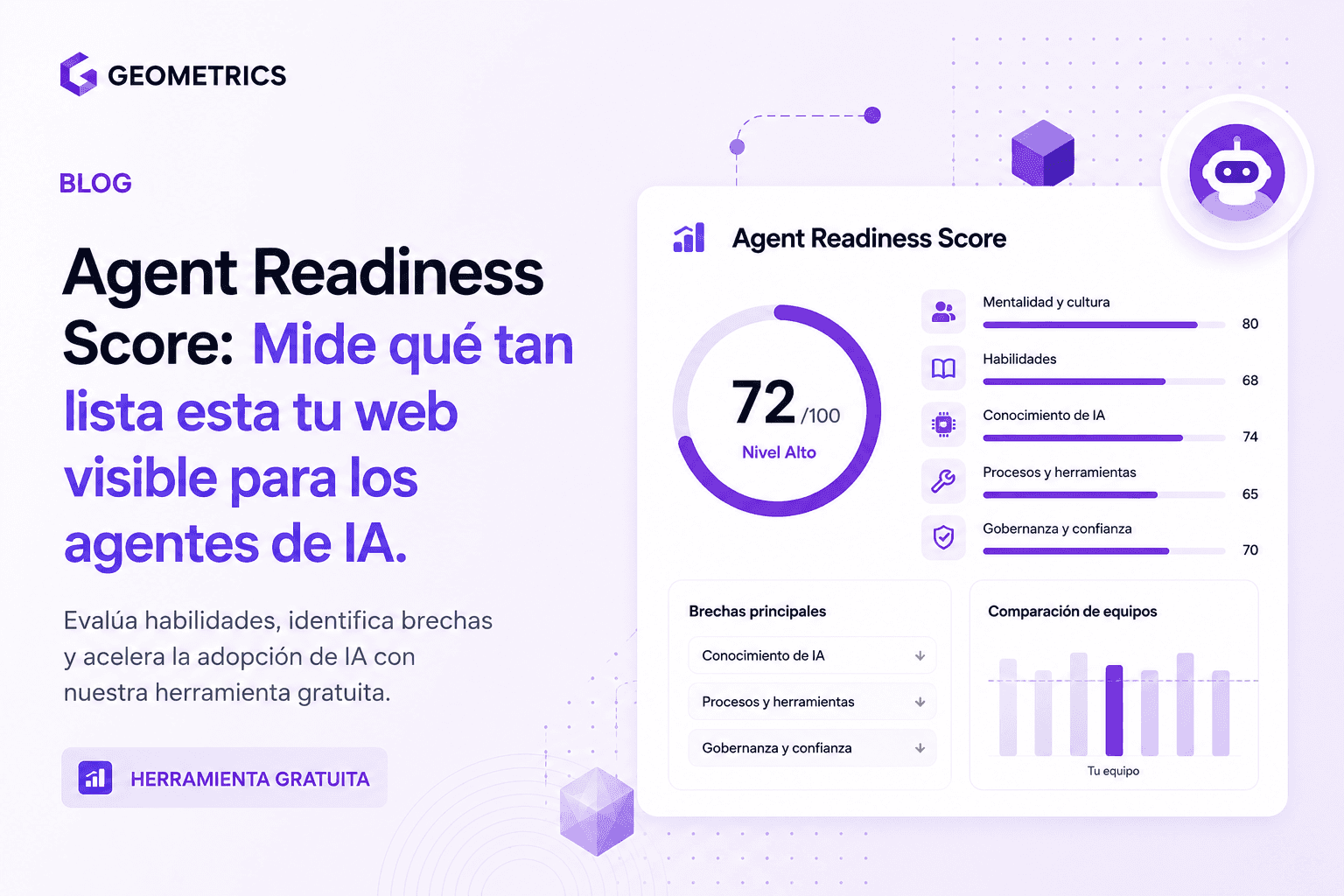

Share of Model (SoM)

The percentage of responses generated by an LLM — across your representative set of prompts — in which your brand is mentioned. It's the equivalent of Share of Voice, applied to the AI space.

For the tour company: if you appear in 23 out of every 100 responses ChatGPT generates for Cancún excursion queries, your Share of Model in that context is 23%. That's an actionable metric. Knowing "you didn't appear in this specific prompt today" is not.

Citation Frequency

How many times your domain appears as a referenced source in the responses. Being mentioned is one signal. Being cited as an authoritative source is a higher-quality signal: it means the model considers your content as the foundation of its response, not just a passing reference.

Narrative Built Around Your Brand

What story the AI is telling about your category and your positioning within it. Two companies with the same Share of Model can have radically different narratives: one appears as the leading reference, the other as a secondary option. That difference isn't captured by any isolated prompt.

Competitive Presence

Who is occupying the space that should be yours. Your Share of Model only has relative value: what matters is how much of the response share your direct competitor is taking in the same thematic context.

The Single-Session Data Mistake

There's a habit spreading rapidly that produces false conclusions: opening ChatGPT, typing your brand name, reading the response and drawing conclusions about your visibility.

That exercise measures nothing useful.

LLMs are probabilistic systems. The same question, on the same platform, in two different sessions, can generate different responses. The variations depend on the conversation context, the exact model version running at that moment and recent system updates.

Making strategic decisions about your visibility based on a one-off response is like judging a team's performance based on a random single game. You might be right by coincidence. But you don't have data.

Reliable measurement requires consistency: same set of contexts, daily cadence, pattern analysis across accumulated responses. Only then does the signal emerge from the noise.

Why This Is Especially Critical in English-Speaking Markets

The prompt problem doesn't disappear in English — it just looks different.

A user in the US searching for a tour company doesn't phrase it the same way as a user in the UK, Australia or Canada. Informal registers, regional vocabulary and platform-specific habits all affect how people phrase their queries to an AI.

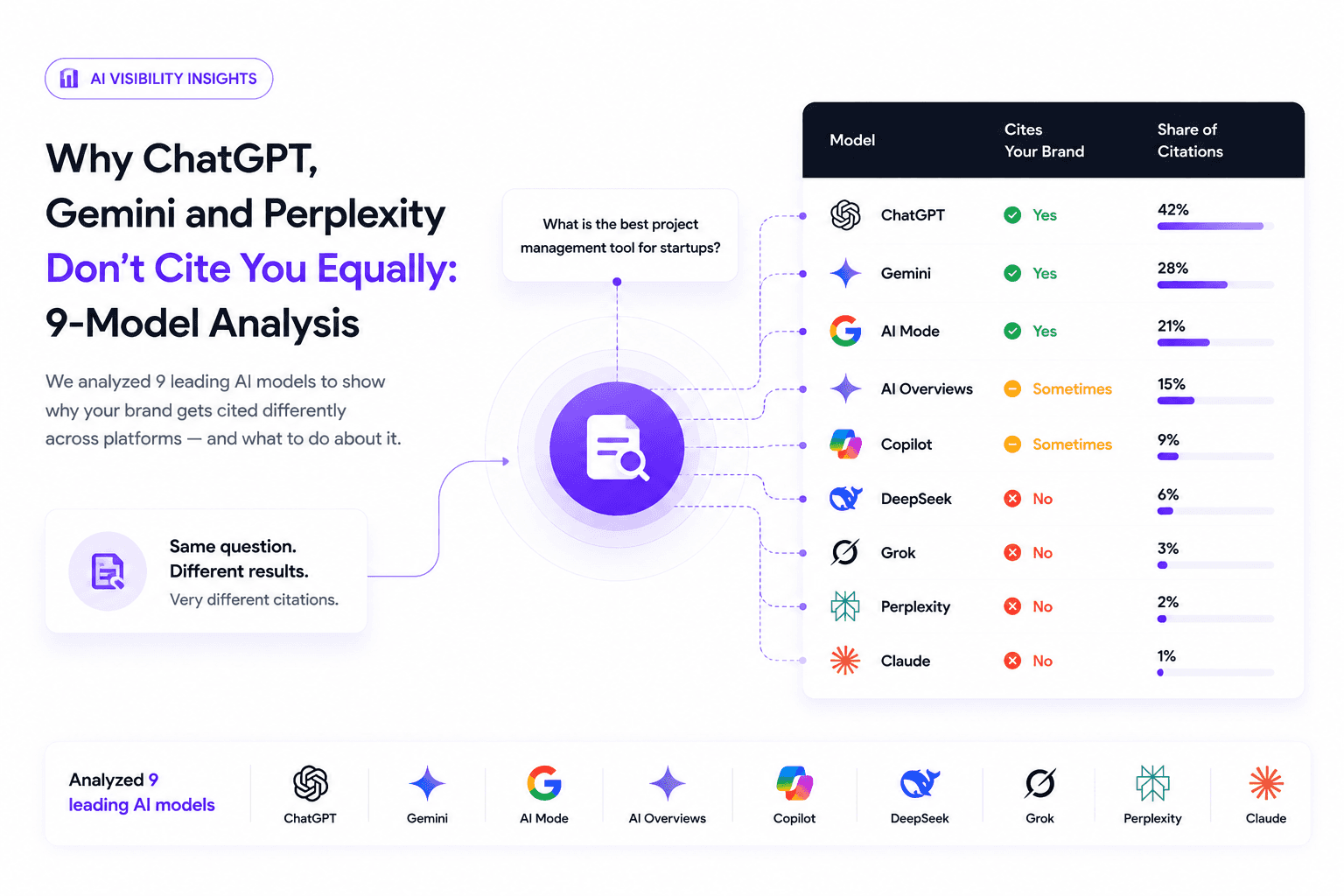

Add the fact that each AI platform has different citation biases, preferred sources and response patterns. Your Share of Model can be 30% on Perplexity and 8% on ChatGPT for the exact same thematic context. Measuring on a single platform gives you a partial picture at best.

Most monitoring tools were built to track a handful of manually entered prompts. That approach doesn't scale, doesn't account for semantic variation and doesn't produce the sample volume you need to make reliable decisions.

How GEO Metrics Solves the Prompt Problem

GEO Metrics starts from this diagnosis: tracking literal prompts doesn't scale and doesn't produce reliable data. The unit of measurement has to be the thematic context.

The platform builds representative prompt sets by context, analyzes them daily across ChatGPT, Gemini, Perplexity and Copilot, and measures Share of Model, citation frequency, brand narrative and competitive presence with the consistency required for patterns to be real.

The result isn't knowing what happened with one prompt today. It's knowing how your visibility evolves in the contexts that matter to your business, which sources the AI is using to build its responses about your category and what your competition is doing in that same space.

GEO Metrics doesn't tell you which prompt to write. It tells you whether you're winning or losing the semantic territory that matters to your business.

Frequently Asked Questions About Prompts, Contexts and AI Visibility

How many prompts do I need to measure my AI visibility reliably?

Fewer than you think, as long as your thematic contexts are clear. If you know which territories you want your brand to be relevant in, you don't need to cover every possible variation — you need prompts that are representative of each context. Even for large brands, a well-defined set per context is enough to produce reliable patterns. The problem isn't the number of prompts. It's not having the context defined before you start.

How often should I analyze my contexts to detect real changes?

The cadence needs to be daily. That's the only way to accumulate enough responses for the sample to be statistically representative. With weekly or one-off measurements you don't have sufficient volume: patterns don't emerge and any conclusion you draw could be noise from a single day. GEO Metrics analyzes contexts daily for exactly that reason — so the data you see reflects real trends, not random variation.

What's the difference between measuring on ChatGPT versus Gemini or Perplexity?

Each platform has different citation biases, preferred sources and distinct response patterns. Your Share of Model can be 30% on Perplexity and 8% on ChatGPT for the same thematic context. Measuring on a single platform gives you a partial picture. Real AI visibility is the aggregate of your presence across the response engines your audience actually uses.

What do I do if an AI mentions my brand with incorrect information?

This is what's known as brand hallucination: the model generates false information about your company with the same confident tone it uses for accurate information. The mitigation strategy involves publishing content with precise data, up-to-date statistics and verifiable sources that reduce the model's creative margin and anchor it to the real facts of your business.

Can I improve my AI visibility without changing my content strategy?

AI visibility depends heavily on which content the models index and which sources they consider authoritative. In most cases, improving Share of Model requires adjustments to your content strategy: greater semantic density on relevant topics, stronger perceived authority and presence in the sources LLMs prioritize for citations.

Want to see your real Share of Model on ChatGPT, Gemini and Perplexity for the contexts that matter to your business?

Request a demo at trygeometrics.com/contact and we'll show you the real data on your AI visibility — no theory, no random prompts.

GEO & AEO expert focused on making brands visible inside AI-generated answers. He leads GEO Metrics, measuring how models like ChatGPT and Gemini cite, rank, and describe brands. His work helps companies move from SEO rankings to true visibility in AI-driven search.

See more articles

Learn actionable strategies, proven workflows, and expert tips to help your brand thrive.

Subscribe to GEO Metrics newsletter!

Receive expert advice, updates, and smart analytical insights directly in your inbox.